Essential Voice Security Measures For Enterprise AI In 2026

Voice AI has become critical infrastructure. The technology now powers healthcare documentation, financial services, and contact center automation. The global voice AI market is projected to reach $32.47 billion by 2030.

This growth moves security from a procurement checkbox to a board-level concern. Voice data is fundamentally different from text. It contains biometric identifiers, unstructured personal information, and content that is harder to monitor and filter. When a breach happens, the damage extends far beyond regulatory fines.

This guide breaks down the essential voice security measures every enterprise needs to implement.

Why Voice AI Security Demands A Different Approach

Voice data is not like other data. When someone speaks, they share more than just words. Voice recordings capture biometric identifiers that can uniquely identify individuals. They contain unstructured personal information (names, addresses, health details, financial data) that flows naturally in conversation. Unlike typed input, voice is harder to scan and filter in real time.

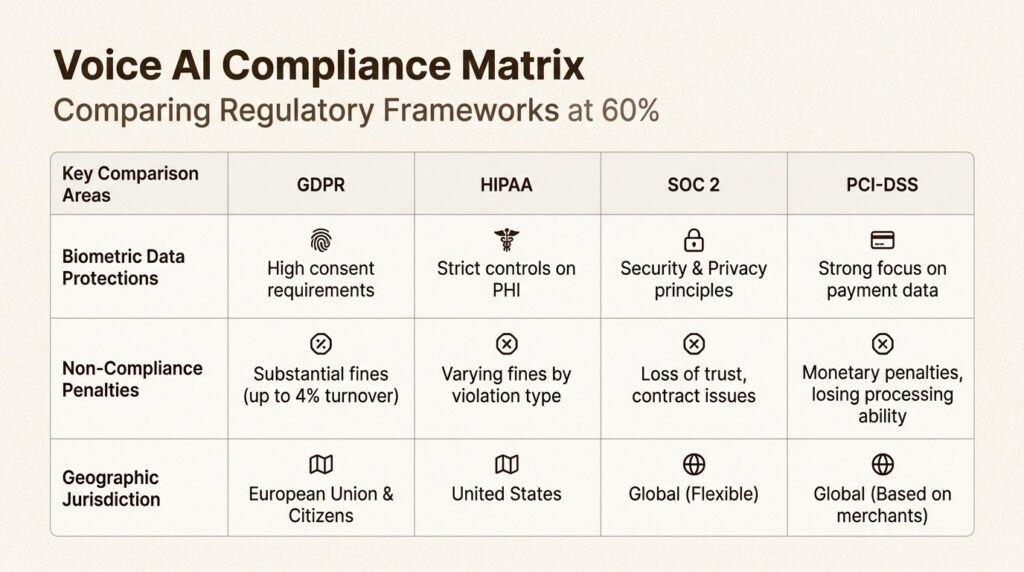

The regulatory landscape reflects this uniqueness. Under the General Data Protection Regulation (GDPR), voice biometrics used to identify a person qualify as special category data, which typically requires explicit consent. The Federal Communications Commission (FCC) has clarified that calls using AI-generated voices count as artificial-voice calls under the Telephone Consumer Protection Act and therefore require the called party's prior express consent. Illinois’ Biometric Information Privacy Act (BIPA) imposes strict requirements on voiceprint collection.

The cost of getting this wrong is substantial. IBM’s 2024 Cost of a Data Breach Report found the average breach costs $4.88 million. For AI-related breaches specifically, that figure rises to $4.9 million. According to Salesforce research, 73% of business leaders worry that generative AI may introduce new security vulnerabilities. Pindrop’s 2025 Voice Intelligence and Security Report estimates $12.5 billion was lost to contact center fraud in 2024 alone.

Traditional security models were built for text and structured data. They do not account for the unique risks of voice: biometric identification, adversarial audio attacks, and the unstructured nature of spoken content. Voice AI security requires a fundamentally different architecture.

Core Security Architecture For Voice AI Systems

Encryption In Transit And At Rest

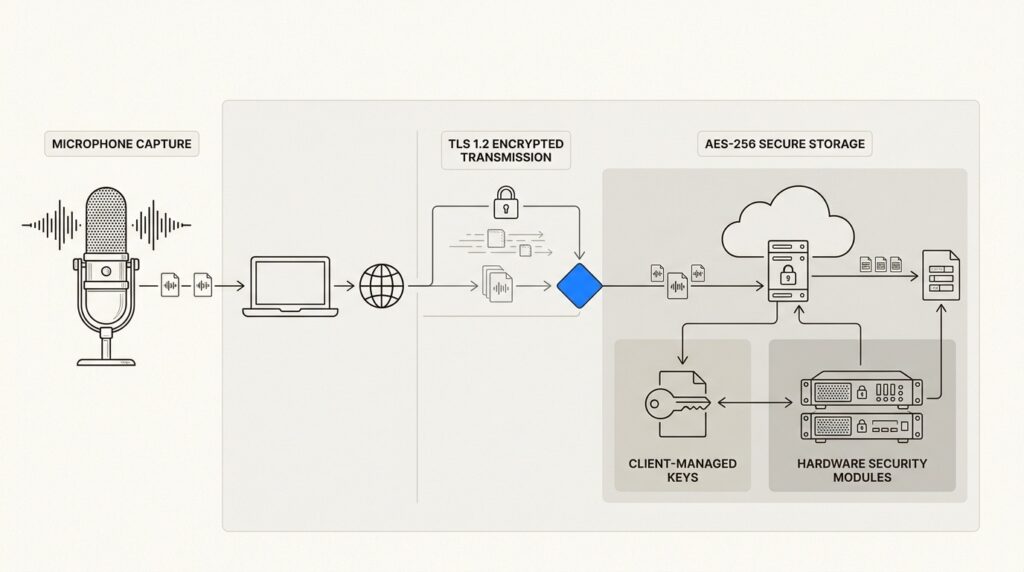

Every piece of voice data should be encrypted throughout its lifecycle. For voice streams in transit, this means TLS 1.2 or higher. For stored recordings and transcripts, AES-256 encryption is the standard.
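The transit half of this requirement can be sketched with Python's standard `ssl` module. This is a minimal illustration, not a full deployment recipe: the function name `make_client_tls_context` is hypothetical, and storage encryption (AES-256) is left out because it depends on the key management service in use.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that refuses anything older than TLS 1.2."""
    # create_default_context() turns on certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse handshakes that negotiate SSLv3, TLS 1.0, or TLS 1.1.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_tls_context()
```

A context built this way can be passed to most standard-library network clients, so every voice stream opened through it inherits the same minimum protocol version.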

End-to-end encryption (E2EE) ensures voice audio and transcripts remain encrypted from capture until they reach a trusted endpoint. This prevents intermediaries from accessing plaintext even if network segments are compromised. Implementing E2EE requires careful key management. Hardware Security Modules (HSMs) provide tamper-resistant storage for encryption keys in high-security environments.

At Shunya Labs, data is encrypted in transit and at rest: TLS for every connection, AES-256 for storage, with keys managed in your cloud, giving enterprises full control over their encryption infrastructure.

Authentication And Access Control

Not everyone needs access to everything. Role-based access control (RBAC) assigns permissions based on job functions. A support technician might only need access to basic transcript logs. An administrator requires broader access for auditing. The principle of least privilege reduces the chance of internal misuse or accidental exposure.
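As a rough sketch of least privilege in code, the example below maps roles to permission sets and denies anything not explicitly granted. The role names, permission names, and the `is_allowed` helper are all hypothetical; a real system would load them from its identity provider.

```python
from enum import Enum


class Permission(Enum):
    READ_TRANSCRIPT_LOGS = "read_transcript_logs"
    PLAY_RECORDINGS = "play_recordings"
    EXPORT_AUDIT_TRAIL = "export_audit_trail"
    MANAGE_USERS = "manage_users"


# Hypothetical mapping: each role gets only what its job function needs.
ROLE_PERMISSIONS = {
    "support_technician": frozenset({Permission.READ_TRANSCRIPT_LOGS}),
    "administrator": frozenset(Permission),  # every permission, for auditing
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles and unlisted permissions get no access."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())
```

The deny-by-default lookup matters more than the specific roles: a role missing from the table gets nothing rather than everything.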

Multi-factor authentication (MFA) should protect all administrative access to voice AI systems. Common second factors include time-based one-time passwords (TOTP), push notifications, and hardware tokens. Voice should never be the only authentication factor, because synthetic voice attacks can spoof a voice prompt used on its own.
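To make the TOTP factor concrete, here is a minimal sketch of the RFC 6238 algorithm using only the Python standard library. The function names and the one-step drift window are illustrative assumptions; production systems would normally rely on a maintained MFA library and add rate limiting.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time password with HMAC-SHA1."""
    now = time.time() if at_time is None else at_time
    counter = int(now // step)  # number of whole time steps since the epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset (RFC 4226)
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str,
                at_time: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step plus `window` steps either side."""
    now = time.time() if at_time is None else at_time
    return any(
        # compare_digest avoids leaking information through timing differences.
        hmac.compare_digest(
            totp(secret, now + drift * step, step).encode(), submitted.encode()
        )
        for drift in range(-window, window + 1)
    )
```

Allowing one step of drift tolerates small clock differences between the server and the authenticator app without keeping old codes valid for long.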

For voice biometric systems, liveness detection is essential. This technology verifies that a presented voice sample originates from a live human rather than a replayed recording or synthetic audio. Active liveness requires user interaction (speaking a randomized phrase). Passive liveness analyzes audio characteristics for natural inconsistencies.
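The bookkeeping behind an active liveness check can be sketched as a short-lived random phrase that the caller must repeat. This covers only the challenge and its expiry. The word list, the 15-second lifetime, and the assumption that a speech recognizer supplies `transcript` are all illustrative, and the sketch does not analyze the audio itself.

```python
import secrets
from dataclasses import dataclass

# Hypothetical word list; a real deployment would use a much larger vocabulary.
WORDS = ("amber", "river", "seven", "candle", "orbit", "maple", "silver", "tango")


@dataclass(frozen=True)
class Challenge:
    phrase: str
    expires_at: float


def issue_challenge(now: float, words: int = 4, ttl_seconds: float = 15.0) -> Challenge:
    """Pick an unpredictable phrase so a pre-recorded sample cannot match it."""
    phrase = " ".join(secrets.choice(WORDS) for _ in range(words))
    return Challenge(phrase=phrase, expires_at=now + ttl_seconds)


def _normalize(text: str) -> str:
    return " ".join(text.lower().split())


def passes_challenge(challenge: Challenge, transcript: str, now: float) -> bool:
    """Require the right words, spoken before the challenge expires."""
    if now > challenge.expires_at:
        return False
    return _normalize(transcript) == _normalize(challenge.phrase)
```

The short expiry is the important design choice here: it limits how long an attacker has to synthesize the requested phrase.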

Network And Infrastructure Security

Voice AI systems should operate within secure network boundaries. IP allowlisting restricts access to known addresses. VPN requirements ensure encrypted tunnels for remote access. Webhook signature verification prevents unauthorized systems from sending data to your endpoints.
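Two of these controls are easy to illustrate with the standard library. The sketch below assumes the sender signs each webhook body with HMAC-SHA256 over a shared secret and sends the hex digest in a header. The allowlisted network is a documentation range, and all names are hypothetical.

```python
import hashlib
import hmac
import ipaddress

# Hypothetical allowlist; 203.0.113.0/24 is a reserved documentation range.
ALLOWED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def is_allowed_ip(address: str) -> bool:
    """Return True only for callers inside an allowlisted network."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in ALLOWED_NETWORKS)


def sign_payload(secret: bytes, payload: bytes) -> str:
    """Hex-encoded HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def is_valid_signature(secret: bytes, payload: bytes, received: str) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_payload(secret, payload)
    return hmac.compare_digest(expected.encode(), received.encode())
```

The signature must be computed over the raw bytes of the request body, before any JSON parsing, or legitimate requests may fail verification.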

Geographic redundancy across data centers ensures availability even during regional outages. Automatic failover mechanisms maintain service continuity. Real-time monitoring and anomaly detection catch unusual access patterns, failed authentication attempts, and unexpected changes in data routing.
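One simple form of anomaly detection is a sliding-window count of failed authentication attempts per account. The following is a minimal sketch with assumed thresholds (five failures in sixty seconds) and a hypothetical class name. Real monitoring stacks would persist this state and feed alerts into an incident workflow.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Flag accounts with too many failed logins inside a time window."""

    def __init__(self, max_failures: int = 5, window_seconds: float = 60.0) -> None:
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)  # account -> recent failure timestamps

    def record_failure(self, account: str, timestamp: float) -> bool:
        """Record one failure; return True when the account should be flagged."""
        events = self._failures[account]
        events.append(timestamp)
        # Drop failures that have aged out of the window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) >= self.max_failures
```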

Compliance Frameworks Every Enterprise Must Address

GDPR And Data Privacy Regulations

The General Data Protection Regulation treats voice data as personal data. When used for identification, voice biometrics become special category data under Article 9, requiring explicit consent and enhanced protections.

Enterprises must establish a lawful basis for processing voice recordings. This could be explicit consent with opt-in mechanisms, legitimate interest with documented balancing tests, or contractual necessity. Data Protection Impact Assessments are required when processing is likely to pose a high risk to individuals, which includes processing voice biometrics at scale.

Users have the right to access their voice data, request corrections, and demand erasure. Organizations must respond to these requests within GDPR’s one-month deadline, which can be extended for complex requests. This requires auditable workflows for locating and deleting specific voice recordings across storage systems.
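An auditable erasure workflow can be sketched as a loop over every storage system that records what was deleted and when. The example uses in-memory dictionaries as stand-ins for real stores. `RecordingStore` and `erase_subject` are hypothetical names, and backups or derived data such as transcripts would need the same treatment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class RecordingStore:
    """Stand-in for one storage system; maps recording_id -> subject_id."""
    name: str
    recordings: dict = field(default_factory=dict)

    def delete_for_subject(self, subject_id: str) -> list:
        doomed = [rid for rid, owner in self.recordings.items() if owner == subject_id]
        for rid in doomed:
            del self.recordings[rid]
        return doomed


def erase_subject(stores: list, subject_id: str, now: datetime) -> list:
    """Delete a subject's recordings everywhere and return an audit trail."""
    audit_log = []
    for store in stores:
        for recording_id in store.delete_for_subject(subject_id):
            audit_log.append({
                "store": store.name,
                "recording_id": recording_id,
                "subject_id": subject_id,
                "erased_at": now.isoformat(),
            })
    return audit_log


hot = RecordingStore("hot", {"r1": "alice", "r2": "bob"})
archive = RecordingStore("archive", {"r3": "alice"})
log = erase_subject([hot, archive], "alice", datetime(2026, 1, 1, tzinfo=timezone.utc))
```

The returned log is what lets an organization show, after the fact, which recordings were removed from which system in response to a given request.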

Industry-Specific Compliance

Healthcare organizations must comply with HIPAA’s Security Rule for electronic protected health information (e-PHI). Voice recordings containing PHI must be encrypted at rest and in transit. Business Associate Agreements (BAAs) are required with voice AI vendors. The HHS Office for Civil Rights provides educational guidance on implementing these safeguards.

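For payment card data, PCI DSS restricts what cardholder data may be stored and requires stored card numbers to be rendered unreadable. In practice, voice AI systems handling transactions should detect and mask or tokenize card numbers in real time so they never reach recordings or transcripts in the clear.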
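Masking card numbers in a transcript can be sketched with a digit pattern plus a Luhn checksum to cut down on false positives. This is an illustration only: it assumes the transcript already renders spoken digits as numerals, and the `[CARD ending ...]` placeholder format is a hypothetical choice.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def mask_card_numbers(transcript: str) -> str:
    """Replace likely card numbers, keeping only the last four digits."""
    def replace(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            return "[CARD ending " + digits[-4:] + "]"
        return match.group()  # leave order numbers and similar untouched

    return CANDIDATE.sub(replace, transcript)
```

The checksum step is what keeps long order or account numbers from being masked by mistake, although a small share of random digit strings will still pass it.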

SOC 2 Type II certification demonstrates that a voice AI vendor maintains comprehensive security controls over time. ISO 27001 certification indicates a robust information security management system.

For enterprises operating in India, the Digital Personal Data Protection Act 2023 establishes consent requirements and data fiduciary obligations. Voice data qualifies as personal data under the Act. Significant data fiduciaries face additional compliance obligations including Data Protection Officer appointment.

Telecommunications And Biometric Laws

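The FCC confirmed in 2024 that calls using AI-generated voices are covered by the Telephone Consumer Protection Act (TCPA) as artificial-voice calls, which require prior express consent (written consent for telemarketing calls). Violations carry statutory damages of $500 per call, rising to $1,500 for willful violations.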

Illinois’ Biometric Information Privacy Act (BIPA) requires written consent before collecting voiceprints, mandates publicly available retention schedules, and prohibits selling biometric data. Private individuals can sue for violations, making compliance essential.

California’s CCPA and CPRA grant consumers rights to know what voice data is collected, opt out of sale, and request deletion. Similar laws are spreading across US states.

Emerging Threats And How To Counter Them

Deepfake And Synthetic Voice Attacks

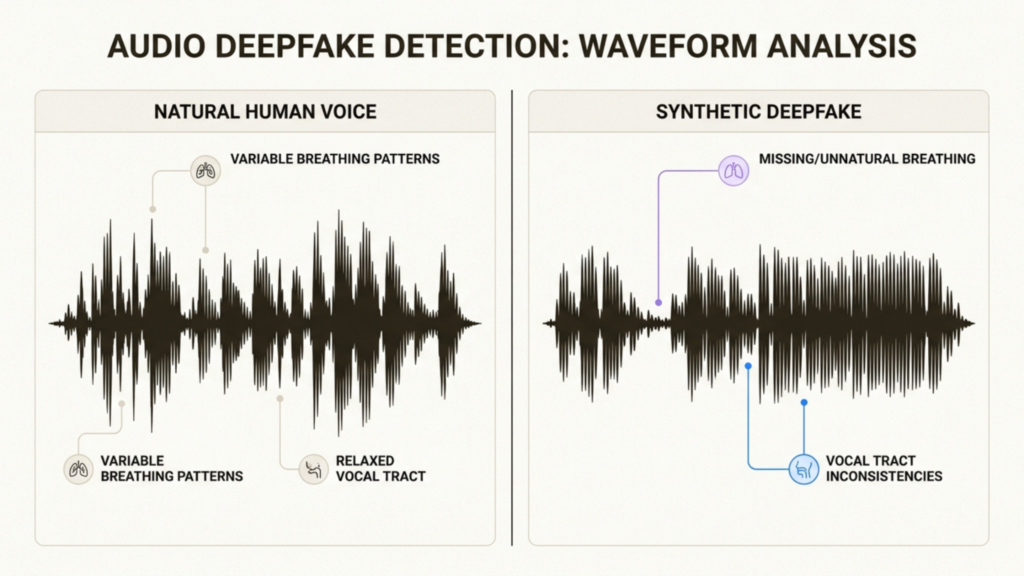

Deepfake fraud attempts rose over 1,300% in 2024, jumping from an average of one per month to seven per day according to Pindrop research. Attackers use minimal audio samples to create convincing voice replicas that bypass traditional authentication.

Anti-spoofing algorithms analyze voice characteristics difficult to replicate: breathing patterns, vocal tract characteristics, and other biometric markers. Multi-layered authentication combining voice with additional factors creates more robust protection.

Adversarial Audio Attacks

Researchers have demonstrated that attackers can craft audio containing hidden commands inaudible to humans but recognized by AI systems. The “DolphinAttack” technique uses ultrasonic frequencies to issue commands without victims’ knowledge.

Defending against these attacks requires adversarial training of voice models, input preprocessing to detect anomalies, and anomaly scoring systems that flag suspicious audio patterns.

Vishing And Social Engineering

Voice-based phishing (vishing) targets employees with calls impersonating banks, tech support, or colleagues. With generative AI, these attacks sound increasingly authentic.

Defense requires employee training on verification protocols: never sharing sensitive information without confirming identity through official channels, hanging up and calling back at verified numbers, and reporting suspicious calls immediately.

Deployment Strategies For Maximum Security

Cloud Deployment Security

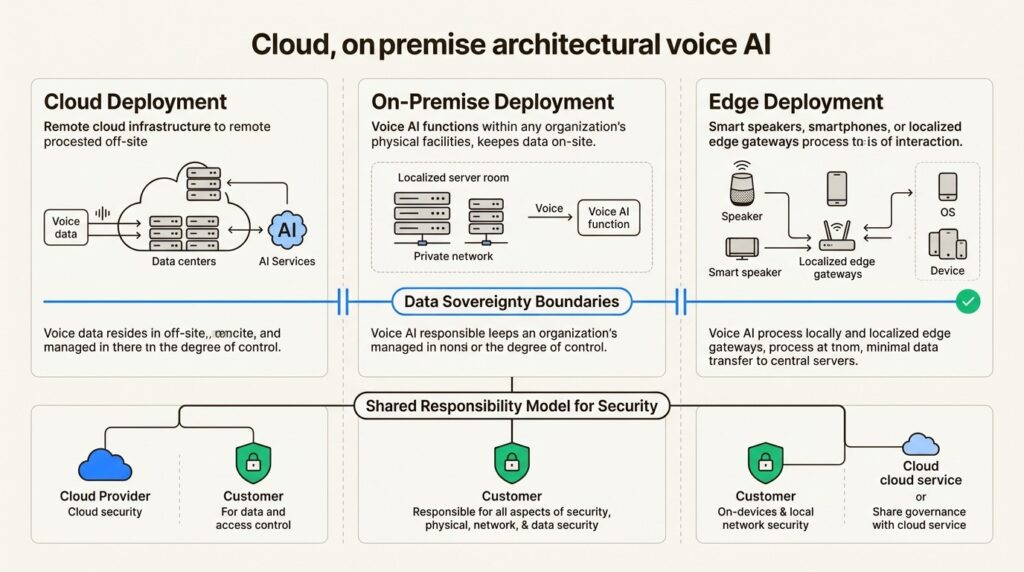

Cloud deployments follow a shared responsibility model. The provider secures the infrastructure. The customer secures their data and configurations. Enterprises must verify cloud providers maintain SOC 2 Type II, ISO 27001, and relevant compliance certifications.

Data residency controls ensure voice data remains in specified geographic regions. This is critical for compliance with data sovereignty requirements in the EU, India, and other jurisdictions.
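At its simplest, a residency control is a check that runs before any write. The sketch below uses made-up tenant and region names and denies any region a tenant has not been explicitly assigned. Real enforcement would also live in the cloud provider's own policy layer.

```python
# Hypothetical tenant-to-region assignments.
TENANT_REGIONS = {
    "eu-clinic": frozenset({"eu-west", "eu-central"}),
    "in-bank": frozenset({"in-south"}),
}


def region_allowed(tenant: str, target_region: str) -> bool:
    """Allow a write only to a region explicitly assigned to the tenant."""
    return target_region in TENANT_REGIONS.get(tenant, frozenset())
```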

On-Premise And Edge Deployment

For maximum control, on-premise deployments keep voice data within enterprise infrastructure. Air-gapped environments provide the highest security for sensitive applications. Edge processing handles voice data locally on devices, reducing exposure during transmission.

On-device processing is especially valuable in healthcare, finance, and government applications where data cannot leave the premises. Latency is reduced and compliance simplified when voice processing happens at the edge.

Hybrid And Multi-Cloud Considerations

Many enterprises use hybrid approaches combining cloud and on-premise resources. Consistent security policies must apply across all environments. API security becomes critical as voice data flows between systems. Centralized monitoring provides visibility into the entire voice AI infrastructure.

At Shunya Labs, we offer deployment flexibility to match your security requirements: cloud API for rapid deployment, local deployment for data sovereignty, and on-premise/edge options for maximum control.

Building Your Voice AI Security Roadmap

Implementing voice AI security is a phased process:

Step 1: Inventory voice data flows. Map where voice data is captured, processed, stored, and transmitted. Identify all systems that touch voice recordings.

Step 2: Map compliance requirements. Determine which regulations apply based on your industry and geographic presence. Healthcare needs HIPAA. EU operations need GDPR. Contact centers need TCPA compliance.

Step 3: Implement encryption and access controls. Deploy TLS 1.2+ for transit, AES-256 for storage, RBAC for access management, and MFA for administrative accounts.

Step 4: Deploy monitoring and anomaly detection. Implement logging, real-time monitoring, and alerting for suspicious access patterns.

Step 5: Establish incident response procedures. Create playbooks for voice data breaches. Define notification timelines and remediation steps.

Step 6: Run regular audits and penetration tests. Schedule periodic security assessments. Test defenses against emerging threats like deepfakes and adversarial audio.
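The compliance mapping in Step 2 lends itself to a small lookup table. The sketch below is a starting point rather than legal advice: the keys are hypothetical labels, and the values are only the regulations named in this guide.

```python
# Hypothetical lookup tables built from the regulations named in this guide.
INDUSTRY_RULES = {
    "healthcare": {"HIPAA"},
    "payments": {"PCI DSS"},
    "contact_center": {"TCPA"},
}
REGION_RULES = {
    "eu": {"GDPR"},
    "india": {"DPDP Act 2023"},
    "illinois": {"BIPA"},
    "california": {"CCPA/CPRA"},
}


def applicable_regulations(industries: list, regions: list) -> list:
    """Return the sorted set of regulations for the given footprint."""
    found = set()
    for industry in industries:
        found |= INDUSTRY_RULES.get(industry, set())
    for region in regions:
        found |= REGION_RULES.get(region, set())
    return sorted(found)
```

For example, a healthcare provider with patients in the EU and Illinois would call `applicable_regulations(["healthcare"], ["eu", "illinois"])` and use the result to scope Steps 3 through 6.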

Secure Your Voice AI With Shunya Labs

Voice AI security is not optional. The regulatory requirements are clear. The threat landscape is evolving. The cost of failure is measured in millions of dollars and irreparable reputation damage.

At Shunya Labs, we built enterprise security from day one. Our platform offers:

- SOC 2 Type II, ISO 27001, and HIPAA compliance for regulated industries

- Two-sided encryption with TLS in transit and AES-256 at rest, plus client-managed keys

- Deployment flexibility across cloud, on-premise, and edge environments

- 32+ Indic language support with code-switching capabilities for regional compliance

- FHIR and HL7 structured outputs for healthcare integration

Whether you are processing millions of customer service calls or transcribing sensitive medical consultations, your voice data deserves enterprise-grade protection.

Ready to secure your voice AI deployment? Contact our team to discuss your security requirements and see how Shunya Labs can help you implement voice AI on your terms.