Why Real-Time Speech Translation Is Becoming Essential For Global Business

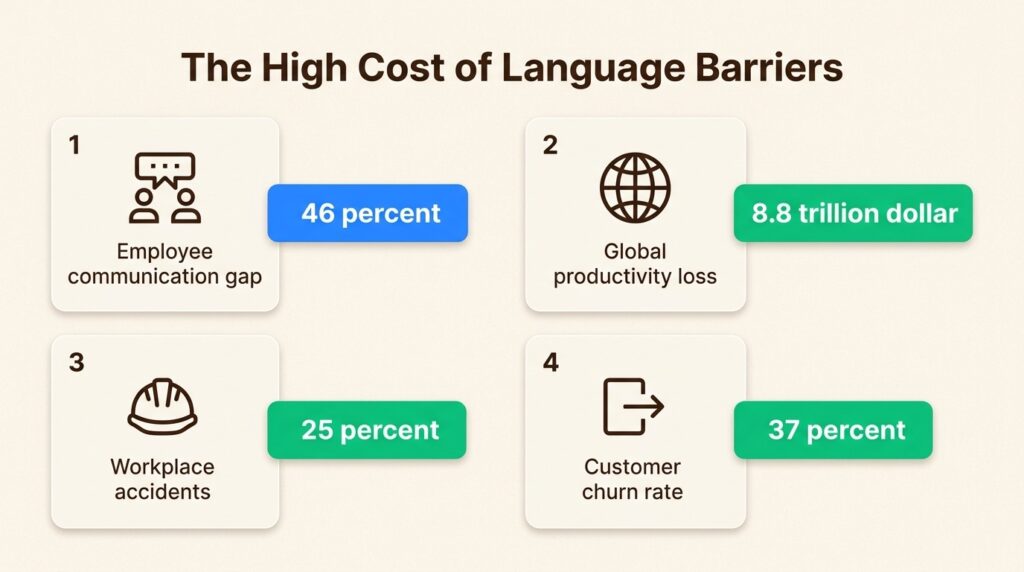

Language barriers are expensive. In a global survey by Rosetta Stone and Forbes Insights, 67% of executives said miscommunication due to language barriers creates inefficiencies in their organizations. Meanwhile, 46% of employees at global companies can’t communicate effectively because of language issues, and 40% report being less productive as a result.

The financial impact is staggering. Gallup estimates that disengaged employees, some of them struggling with communication gaps, cost the world $7.8 trillion in lost productivity annually. The Occupational Safety and Health Administration (OSHA) estimates that language barriers are a contributing factor in 25% of workplace accidents. In customer-facing operations, a PwC study found that 32% of customers would leave a brand they love after just one bad experience, and language barriers are a common culprit.

Real-time speech translation has evolved from a convenient technology to critical business infrastructure. Here’s why global organizations are treating it as essential, not optional.

The Hidden Cost Of Language Barriers In Global Operations

Let’s look at what language barriers actually cost businesses.

Productivity losses: Research by Relay Pro found that 86% of manufacturing professionals feel language barriers reduce their productivity. Bilingual staff spend an average of 4 hours per week translating for colleagues, costing businesses approximately $7,500 per bilingual employee annually.

Safety incidents: Workplace accidents cost the US economy $167 billion per year. Language barriers can contribute directly, with workers potentially misunderstanding hazard warnings and safe operating procedures.

Customer churn: When customers can’t communicate effectively with support teams, frustration builds quickly. Acquiring a new customer costs 5 to 25 times more than retaining an existing one, making language-related churn particularly expensive.

Slower decision-making: 67% of executives point to language-related miscommunication as a source of inefficiency. For global teams, this translates to delayed product launches, missed market opportunities, and competitive disadvantage.

Traditional solutions like human interpreters or hiring native speakers for every market don’t scale. Flying interpreters to events is expensive and logistically complex. Hiring multilingual staff for every region limits talent pools and drives up costs. Businesses needed a different approach. Real-time speech translation emerged as the answer.

How Real-Time Speech Translation Works Today

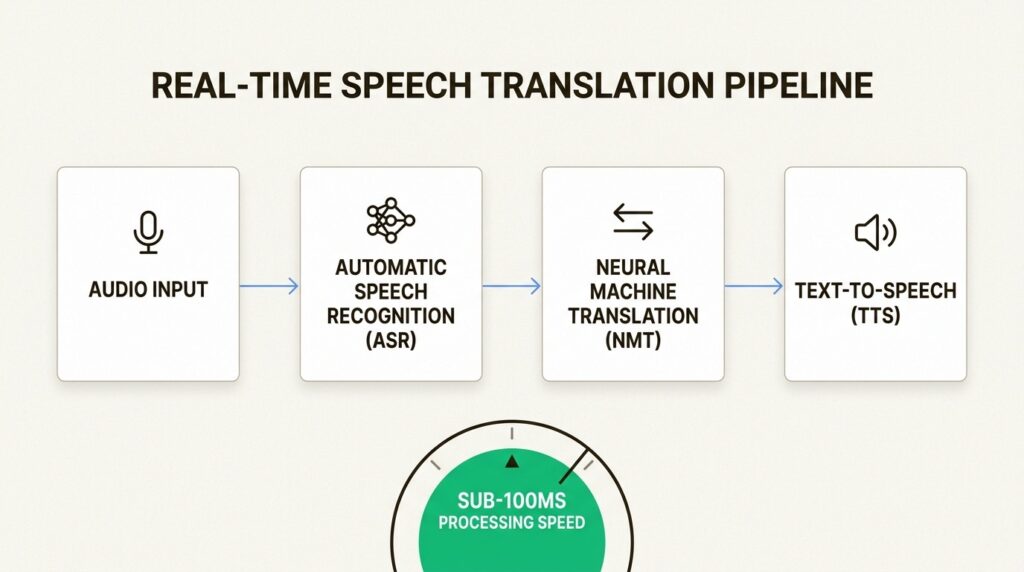

At its core, real-time speech translation combines three technologies: speech recognition, neural machine translation, and voice synthesis.

The process works like this: spoken audio is captured and converted to text through automatic speech recognition (ASR). This text is then translated using neural machine translation (NMT) models trained on vast multilingual datasets. Finally, text-to-speech (TTS) technology generates natural-sounding audio in the target language.
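The three stages described above can be sketched as a simple function chain. This is a minimal illustration, not any vendor's implementation: the function names, the `TranslationResult` type, and the toy stand-ins for ASR, NMT, and TTS are all hypothetical, and a production system would stream partial results through each stage rather than wait for a complete utterance.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TranslationResult:
    transcript: str    # source-language text produced by ASR
    translation: str   # target-language text produced by NMT
    audio: bytes       # target-language audio produced by TTS


def translate_speech(
    audio: bytes,
    source_lang: str,
    target_lang: str,
    recognize: Callable[[bytes, str], str],
    translate: Callable[[str, str, str], str],
    synthesize: Callable[[str, str], bytes],
) -> TranslationResult:
    """Run one utterance through the ASR -> NMT -> TTS chain."""
    transcript = recognize(audio, source_lang)
    translation = translate(transcript, source_lang, target_lang)
    speech = synthesize(translation, target_lang)
    return TranslationResult(transcript, translation, speech)


# Toy stand-ins (assumptions for illustration only). Real stages would
# call speech and translation models instead of these placeholders.
def toy_recognize(audio: bytes, lang: str) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are the words


PHRASES = {("en", "es"): {"hello": "hola"}}


def toy_translate(text: str, src: str, tgt: str) -> str:
    return PHRASES.get((src, tgt), {}).get(text.lower(), text)


def toy_synthesize(text: str, lang: str) -> bytes:
    return text.encode("utf-8")  # pretend the text bytes are audio


result = translate_speech(
    b"hello", "en", "es", toy_recognize, toy_translate, toy_synthesize
)
```

Passing the stages in as callables keeps each one swappable, which matters when a general-purpose model needs to be replaced by a domain-specific one.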

Modern systems complete this pipeline in near real time, with latencies measured in milliseconds. Shunya Labs delivers streaming ASR with low latency, making conversations feel natural and responsive.

The technology has improved dramatically in recent years. Early translation systems struggled with accents, idioms, and context. Today’s neural networks handle these challenges far better. They can distinguish between formal and informal speech, recognize industry-specific terminology, and even adapt to individual speaking styles.

However, not all translation systems are equal. Generic solutions often fail in specialized contexts. A medical consultation requires different vocabulary than a manufacturing floor conversation. Financial services have their own terminology. This is where specialized models make a difference.

Where Real-Time Translation Delivers Business Value

Real-time speech translation creates value across multiple operational areas. Here’s where organizations see the biggest impact.

Internal collaboration and productivity

Global teams can communicate without friction. Sales teams, for example, can conduct pipeline reviews across regions in real time, with each participant speaking their own language.

The productivity gains extend beyond meetings. When employees can access training materials in their native language, knowledge transfer accelerates. Companies report that multilingual training programs see higher completion rates and better knowledge retention.

Customer experience and support

Contact centers represent one of the strongest use cases for real-time translation. Instead of hiring native speakers for every language they support, companies can serve customers in their preferred language using AI translation.

The system can capture customer speech, translate it in real time, and allow agents to respond in their own language while the customer hears a natural translation. The result is scalable multilingual support without proportional hiring costs.

The business case is compelling. Customer satisfaction scores improve when people can communicate in their native language. First-call resolution rates increase. Support costs per interaction drop significantly.

Compliance and safety

For regulated industries, language barriers can create compliance risks. Training on safety protocols, legal requirements, and operational procedures must be understood precisely. Miscommunication can lead to violations, accidents, or litigation.

Real-time translation can ensure that training reaches all employees in their native language. Manufacturing facilities can use it to communicate updates instantly across shifts. Healthcare organizations deploy it for patient communications, where accuracy is critical.

Market expansion

Entering new markets traditionally required significant localization investment. Real-time translation reduces this barrier. Companies can test market demand, conduct customer research, and support initial sales without full localization teams.

Events and conferences benefit enormously as well: attendees can participate in their preferred language through their own devices.

What To Look For In A Speech Translation Solution

Choosing the right solution requires evaluating several factors beyond basic translation accuracy.

Language coverage that matches your needs

Most providers cover major languages like English, Spanish, Mandarin, and Arabic. But global businesses often need more.

India alone has hundreds of languages and dialects. Code-switching, where speakers mix languages mid-sentence (as in Hinglish, a blend of Hindi and English), is common in multilingual regions. Generic translation systems struggle with these patterns.

Deployment flexibility for data sovereignty

Where your data lives matters. Many industries face strict data residency requirements:

| Industry | Data Concern |
|---|---|
| Healthcare | HIPAA compliance, patient privacy |
| Financial Services | Regulatory reporting, transaction records |
| Government | National security, classified information |
| Legal | Attorney-client privilege, discovery |

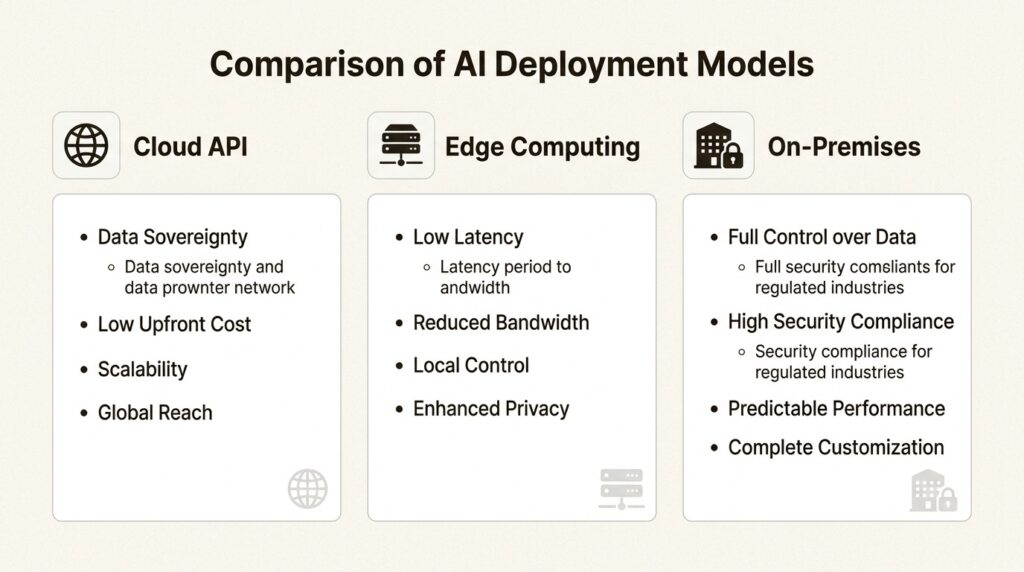

Cloud-only solutions may not work for these use cases. Organizations need options.

Shunya Labs provides cloud, edge, on-device, and on-premises deployment options. This flexibility lets organizations meet strict data privacy requirements while still accessing advanced translation capabilities. SOC 2 Type II and ISO 27001 certifications provide the audit trails enterprises require.

Integration and developer experience

Speech translation should fit into existing workflows, not require wholesale system replacement. Look for:

- REST APIs and SDKs in common languages

- WebSocket support for streaming audio

- Pre-built integrations with common platforms

- Voice agent orchestration capabilities
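To make the streaming item above concrete, here is a minimal sketch of what a client typically does before sending audio over a streaming connection: it cuts raw PCM audio into small fixed-length frames so the server can start recognizing speech before the speaker finishes. The 16 kHz sample rate, 16-bit mono format, and 20 ms frame length are illustrative assumptions, not the requirements of any particular provider, and the actual network send is left out because it depends on the provider's API.

```python
from typing import Iterator


def frame_size_bytes(
    sample_rate_hz: int,
    frame_ms: int,
    bytes_per_sample: int = 2,  # assumption: 16-bit PCM
    channels: int = 1,          # assumption: mono
) -> int:
    """Number of bytes in one audio frame of the given duration."""
    samples_per_frame = sample_rate_hz * frame_ms // 1000
    return samples_per_frame * bytes_per_sample * channels


def iter_frames(pcm: bytes, size: int) -> Iterator[bytes]:
    """Yield consecutive frames; the last one may be shorter."""
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size]


# One second of silence at the assumed format: 16,000 samples * 2 bytes.
one_second = bytes(16_000 * 2)
size = frame_size_bytes(16_000, 20)
frames = list(iter_frames(one_second, size))
```

Smaller frames lower the delay before the first partial transcript but increase the number of messages sent, so frame length is usually a tuning decision made against the provider's documented limits.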

Shunya Labs offers a complete voice AI stack from foundation models to orchestration. Our Voice Agent Orchestration framework includes intent recognition, entity extraction, and sentiment analysis, enabling sophisticated conversational automation beyond simple translation.

Domain-specific accuracy

Generic translation works for general conversation. Specialized domains need specialized models.

| Domain | Specialization Needed |
|---|---|
| Healthcare | Medical terminology, HIPAA compliance |
| Legal | Precise terminology, regulatory language |
| Manufacturing | Technical specifications, safety protocols |
| Finance | Numerical accuracy, compliance language |
| Customer Service | Sentiment detection, empathy |

The Future Of Multilingual Business Operations

Real-time speech translation is moving from a standalone tool to embedded infrastructure. We’re seeing this evolution in several ways.

AI agent integration: Translation is becoming part of conversational AI systems. Voice agents that can understand and respond in multiple languages will become standard for customer service, sales, and internal support.

On-device processing: For privacy-sensitive applications, translation is moving to edge devices. This eliminates cloud latency and keeps data local. We support edge deployment for exactly these scenarios.

Improved contextual understanding: Neural networks continue to improve at understanding nuance, sarcasm, and cultural context. The gap between human and machine translation quality is narrowing.

Unified communication platforms: Rather than separate translation tools, expect translation to be built into every communication channel: video conferencing, phone systems, chat applications, and email.

The question is no longer whether organizations will adopt real-time translation, but how quickly they can deploy it effectively.

Building Voice AI On Your Terms

Real-time speech translation has become essential infrastructure for global business. The costs of language barriers, measured in lost productivity, safety incidents, and customer churn, far exceed the investment required to solve them.

But not all translation solutions are created equal. Generic cloud APIs work for simple use cases. Organizations with complex requirements, specialized domains, or strict compliance needs require more.

We provide a complete voice AI stack built for these realities. From our Zero STT foundation models to our Vāķ platform delivering real-time speech-to-speech translation across 55 languages and 2,970 translation pairs (a count consistent with every ordered source-to-target combination, 55 × 54), we offer the depth that global enterprises require.

Our focus on Indic languages, code-switching support, and flexible deployment options (cloud, edge, or on-premises) addresses the gaps that generic solutions leave open. With SOC 2 Type II and ISO 27001 certifications, we meet the security standards that regulated industries demand.

Voice AI on your terms means modular components that integrate with your existing systems. It means choosing where your data lives. It means specialized models for your specific domain. That’s what we’ve built.

If your organization is evaluating real-time speech translation, we’d welcome the conversation. Let’s discuss how to remove language barriers from your global operations.

References

Hale, T. (2023). Training in Native Language Makes Workplaces Safer. [online] Shrm.org. Available at: https://www.shrm.org/in/topics-tools/news/risk-management/training-native-language-makes-workplaces-safer [Accessed 14 Apr. 2026].

Pendell, R. (2022). The World’s $7.8 Trillion Workplace Problem. [online] Gallup.com. Available at: https://www.gallup.com/workplace/393497/world-trillion-workplace-problem.aspx.

PwC (2022). Customer Experience Is Everything. [online] PWC. Available at: https://www.pwc.com/us/en/services/consulting/library/consumer-intelligence-series/future-of-customer-experience.html.

Relay. (2025). The Hidden Costs of Language Barriers in Industrial Environments – Relay. [online] Available at: https://relaypro.com/resources/the-hidden-costs-of-language-barriers-in-industrial-environments/#lead-form [Accessed 14 Apr. 2026].

Rubin, J. Peter (2011). Reducing the Impact of Language Barriers. [online] Available at: https://images.forbes.com/forbesinsights/StudyPDFs/Rosetta_Stone_Report.pdf.